Zhicheng Zhang (张知诚)


Job Seeking

I am actively seeking exciting opportunities starting in Fall 2026. Please feel free to explore my [CV] for more information about my background and qualifications. If you’re interested, feel free to drop me an [Email], and I’ll get back to you promptly.

Bio

I’m a final-year PhD student at Nankai University, advised by Prof. Jufeng Yang of the Computer Vision Lab. Before that, I received my bachelor’s degree from Xidian University in 2021. My research interests include computer vision and deep learning, with a particular focus on video generation and multimodal LLMs. Suggestions are always welcome. You can contact me in the following ways:

Email | CV | GitHub | Google Scholar | WeChat

News

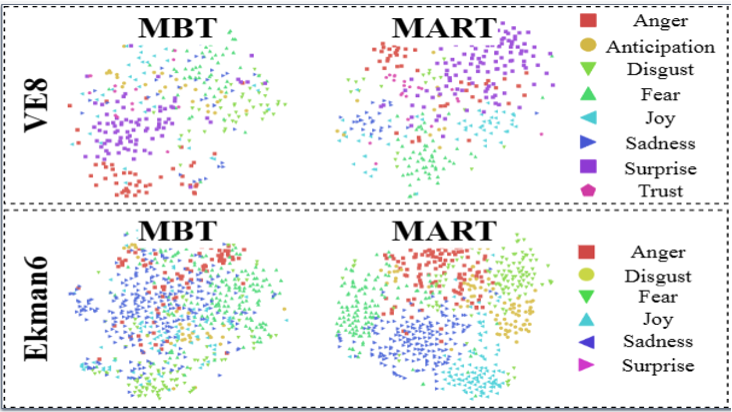

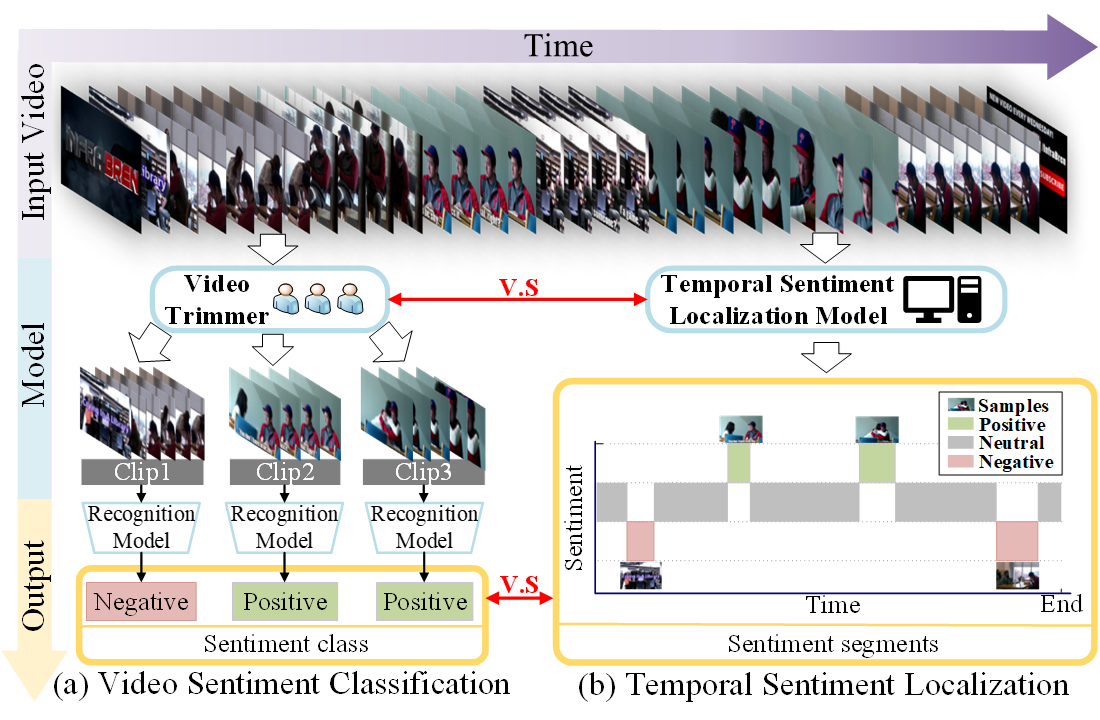

| Sep, 2025 | We built a new video large language model named VidEmo for video emotion analysis, and the paper was accepted by NeurIPS 2025. |
|---|---|
| Jun, 2025 | I’m heading to Zhuhai, a beautiful seaside city, to participate in VALSE 2025. |
| May, 2025 | We built a new multimodal large language model named MODA, and the paper was selected as a spotlight poster at ICML 2025, representing the top 2.6% of all submissions. |
| Nov, 2024 | I am honored to have been selected for the inaugural (2024) “CIE–Tencent Ph.D. Research Incentive Program (Hunyuan LLM Special)”, with sincere thanks to CIE and Tencent [link]. |
| Jul, 2024 | I was invited to give a talk at the 11th CSIG student member sharing forum on label-efficient video emotion analysis [link]. |
| Jun, 2024 | I’m going to Seattle for CVPR 2024. |
| Feb, 2024 | Three papers on video generation, video emotion analysis, and camouflaged image generation were accepted by CVPR 2024. |
| Oct, 2023 | I’m going to Paris for ICCV 2023. |
| Jul, 2023 | One paper on plane tracking was accepted by ICCV 2023. |
| May, 2023 | I received the SK AI Innovation Scholarship. |